A ceux qui auraient tendance à voir une référence à autre choses (20 % d’armée + 80 % d’air, par exemple) : Passez votre chemin !

En mathématiques, l’indicatrice d’Euler est une fonction de la théorie des nombres.

Elle intervient en mathématiques pures, à la fois en théorie des groupes, en théorie algébrique des nombres et en théorie analytique des nombres.

En mathématiques appliquées, à travers l’arithmétique modulaire, elle joue un rôle important en théorie de l’information et plus particulièrement en cryptologie.

La fonction indicatrice est aussi appelée fonction phi d’Euler ou simplement la fonction phi, car la lettre φ est communément utilisée pour la désigner.

Elle est nommée en l’honneur du mathématicien suisse Leonhard Euler (1707 – 1783) qui fut le premier à l’étudier.

Définition et calcul explicite

Définition et exemple

Plus formellement :

Par exemple :

- φ(8) = 4 car parmi les nombres de 1 à 8, seuls les quatre nombres 1, 3, 5 et 7 sont premiers avec 8,

- φ(1) = 1 car 1 est premier avec lui-même (c’est le seul entier naturel qui vérifie cette propriété, si bien que, pour tout entier n > 1, on peut remplacer m ≤ n par m < n dans la définition ci-dessus de φ(n)).

- φ(2) = 1.

Premières propriétés

Dans ce paragraphe, n désigne un entier strictement positif.

Cette propriété est démontrée dans le paragraphe Structure additive de l’article Anneau Z/nZ.

Cette propriété est démontrée dans le paragraphe Groupe des unités de l’article Anneau Z/nZ.

- Si u et v sont deux entiers strictement positifs et premiers entre eux, alors φ(u.v)=φ(u).φ(v).

Une telle fonction est dite multiplicative. On peut démontrer cette propriété à partir du théorème des restes chinois pour les groupes, selon lequel le groupe cyclique (Z/(uv)Z,+) est isomorphe au produit (Z/uZ)×(Z/vZ). Un couple (x,y) de ce groupe produit est générateur si et seulement si x est générateur de Z/uZ et y est générateur de Z/vZ. Le nombre d’éléments générateurs du groupe produit est donc égal à φ(u).φ(v). L’isomorphisme montre que cette valeur est égale au nombre d’éléments générateurs du groupe Z/(uv)Z, ce qui démontre la formule recherchée.

Calcul

La valeur de l’indicatrice d’Euler s’obtient par l’expression de n donnée par le théorème fondamental de l’arithmétique :

Dans la formule,

pi désigne un

nombre premier et

ki un entier strictement positif.

En effet, le caractère multiplicatif de l’indicatrice d’Euler et une récurrence montrent que :

Il suffit alors de dénombrer le nombre d’entiers non premiers avec une puissance d’un nombre premier et plus petit que celui-ci pour remarquer que :

![\forall i \in [1, q] \quad \varphi(p_i^{k_i})= p_i^{k_i} - p_i^{k_i - 1}=(p_i-1).p_i^{k_i-1}](http://bin.sensegates.com/s/5/9/b/59bf0f4ec96dacc674246b17b3b76b39.png)

Ce qui permet de conclure la démonstration.

Autres propriétés

Arithmétique modulaire

L’indicatrice d’Euler est une fonction essentielle de l’arithmétique modulaire, elle est à la base de résultats fondamentaux, à la fois en mathématiques pures et appliquées.

Cette propriété est une conséquence directe du calcul explicite de l’indicatrice.

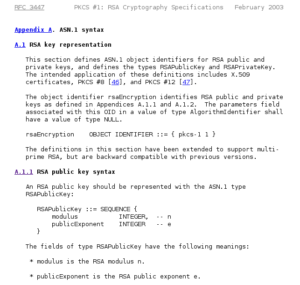

La cryptologie utilise largement cette fonction. Le code RSA se fonde sur le théorème d’Euler, indiquant que si n est un entier strictement positif et a un entier premier avec n, alors aφ(n) ≡ 1 (mod n).

Une autre branche de la théorie de l’information utilise l’indicatrice : la théorie des codes. C’est les cas des codes correcteurs, et particulièrement des codes cycliques. Ce type de code se construit à l’aide de polynôme cyclotomique et le degré du polynôme cyclotomique Φn d’indice n à coefficients dans les entiers est égal à φ(n). Plus précisément, on dispose des égalités suivantes :

La somme et le produit sont étendus à tous les diviseurs positifs

d de

n.

La formule d’inversion de Möbius permet d’inverser cette somme :

Ici, μ désigne la

fonction de Möbius usuelle définie sur l’ensemble des entiers strictement positifs, la démonstration est proposée dans l’article associé.

Théorie analytique des nombres

Les deux fonctions génératices présentées ici sont des conséquences directes du fait que :

Une série de Dirichlet utilisant  est

est

qui est dérivé depuis :

ou  est la fonction zêta de Riemann.

est la fonction zêta de Riemann.

Une série de Lambert utilisant  est

est

qui converge pour |q|<1.

dérivé de :

avec

Croissance de la fonction

La croissance de  comme une fonction de n est une question intéressante. La première impression que l’on a pour les petits n est que

comme une fonction de n est une question intéressante. La première impression que l’on a pour les petits n est que  doit être notablement plus petit que n, ce qui est quelque peu erroné. Asymptotiquement, nous avons

doit être notablement plus petit que n, ce qui est quelque peu erroné. Asymptotiquement, nous avons

pour n’importe quel  et

et  . En fait, si nous considérons

. En fait, si nous considérons

nous pouvons écrire, à partir de la formule précédente, sous forme de produit de facteurs

où les p sont des nombres premiers divisant n. Par conséquent les valeurs de n correspondantes aux valeurs particulièrement petites du rapport sont les n qui sont le produit d’un segment initial de la suite de tous les nombres premiers. À partir du théorème des nombres premiers il peut être montré qu’une constante ε dans la formule précédente peut par conséquent être remplacée par

.

.

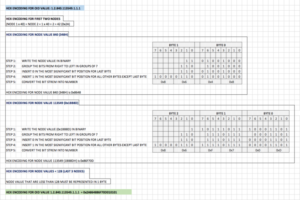

Les 99 premières valeurs de la fonction φ

Les 100 premières valeurs de la fonction φ

|

+0 |

+1 |

+2 |

+3 |

+4 |

+5 |

+6 |

+7 |

+8 |

+9 |

| 0+ |

|

1 |

1 |

2 |

2 |

4 |

2 |

6 |

4 |

6 |

| 10+ |

4 |

10 |

4 |

12 |

6 |

8 |

8 |

16 |

6 |

18 |

| 20+ |

8 |

12 |

10 |

22 |

8 |

20 |

12 |

18 |

12 |

28 |

| 30+ |

8 |

30 |

16 |

20 |

16 |

24 |

12 |

36 |

18 |

24 |

| 40+ |

16 |

40 |

12 |

42 |

20 |

24 |

22 |

46 |

16 |

42 |

| 50+ |

20 |

32 |

24 |

52 |

18 |

40 |

24 |

36 |

28 |

58 |

| 60+ |

16 |

60 |

30 |

36 |

32 |

48 |

20 |

66 |

32 |

44 |

| 70+ |

24 |

70 |

24 |

72 |

36 |

40 |

36 |

60 |

24 |

78 |

| 80+ |

32 |

54 |

40 |

82 |

24 |

64 |

42 |

56 |

40 |

88 |

| 90+ |

24 |

72 |

44 |

60 |

46 |

72 |

32 |

96 |

42 |

60 |

On observe que, excepté pour n = 1 ou 2,  est pair, propriété qui est générale. En effet, en notant

est pair, propriété qui est générale. En effet, en notant  avec les

avec les  impairs et q éventuellement nul (produit vide), on a :

impairs et q éventuellement nul (produit vide), on a :

Or si

n > 2, alors

k > 1 ou

q > 0. Dans un cas comme dans l’autre, on obtient bien que

est pair.

Autres formules impliquant la fonction φ d’Euler

pour

pour

pour

pour

Inégalités

Certaines inégalités impliquant la fonction  sont :

sont :

pour n > 2, où

pour n > 2, où  est la constante d’Euler,

est la constante d’Euler,

pour n > 0,

pour n > 0,

et

pour n > 6.

pour n > 6.

Pour un nombre premier n, clairement  . Pour un nombre composé n, nous avons

. Pour un nombre composé n, nous avons

Pour tous les  :

:

Pour un grand n aléatoire, ces bornes ne peuvent pas être encore améliorées, en effet :

Une paire d’inégalités combinant la fonction  et la fonction diviseur

et la fonction diviseur  sont :

sont :

Conjectures

Voir aussi

![\forall i \in [1, q] \quad \varphi(p_i^{k_i})= p_i^{k_i} - p_i^{k_i - 1}=(p_i-1).p_i^{k_i-1}](http://bin.sensegates.com/s/5/9/b/59bf0f4ec96dacc674246b17b3b76b39.png)

est

est

est la

est la

et

et  . En fait, si nous considérons

. En fait, si nous considérons

.

.

avec les

avec les  impairs et q éventuellement nul (produit vide), on a :

impairs et q éventuellement nul (produit vide), on a :

pour

pour

pour

pour

pour n > 2, où

pour n > 2, où  est la

est la  pour n > 0,

pour n > 0, pour n > 6.

pour n > 6. . Pour un

. Pour un

:

:

et la

et la  sont :

sont :

est premier si et seulement si

est premier si et seulement si  (énoncée par

(énoncée par  (c’est la «

(c’est la «